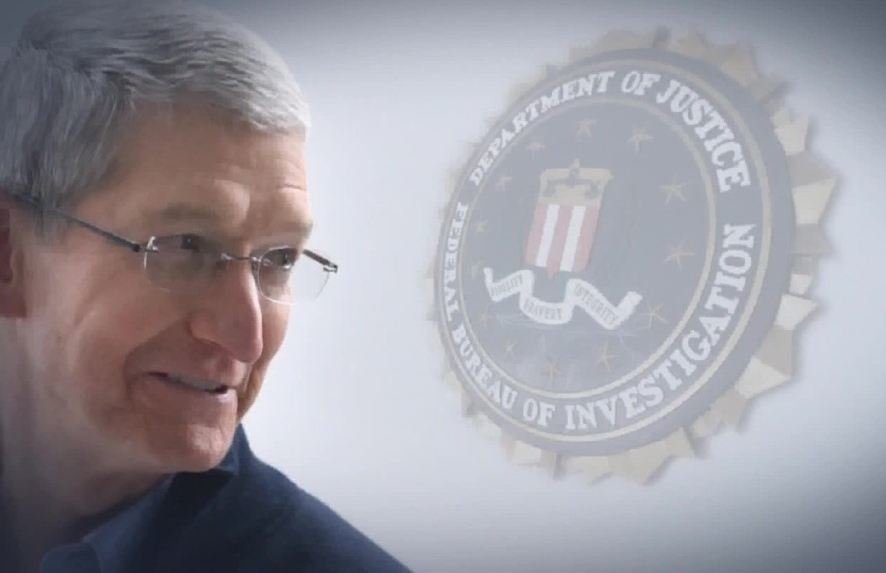

The FBI Says Apple Cares More About Its Reputation Than Fighting Terrorism

The FBI has accused Apple of prioritising its reputation above public safety, after the company refused to unlock the iPhone of a terrorist gunman.

Investigators in the US want to access data stored on the iPhone that belonged to Syed Farook. In December, with his wife Tashfeen Malik, Farook killed 14 people in San Bernardino, California.

The US Department of Justice has sought a court order to force Apple to build new software that can crack the iPhone’s encryption. It says that the investigation into the murders is being hindered by the lack of access to Farook’s data. In particular, officials are still trying to determine to what extent the couple had been radicalised by Islamist groups such as ISIS, and whether they had contacted other terrorists.

Apple said it will fight the order, calling it “chilling”. Its CEO Tim Cook explained the company’s position in a letter to customers (www.apple.com/customer-letter). He warned that the software could be used to “intercept your messages, access your health records or financial data, track your location, or even access your phone’s microphone or camera”.

Cook said that the FBI wants Apple to build a “backdoor” into the iPhone’s operating system (iOS), something that, while technically feasible, is “too dangerous to create”.

Attorneys for the Justice Department said that Apple’s stance seems “to be based on concern for its business model and brand marketing strategy”. They think it’s just a PR stunt to portray Apple as the defender of public privacy.

Apple itself has admitted the risk of “reputational harm”. During a separate US court case in October last year, Apple said that being forced to unlock iPhones and extract data would “threaten the trust between Apple and its customers and substantially tarnish the Apple brand”.

Yet Apple hasn’t always been so uncooperative. Prosecutors in the case last October pointed out that Apple had previously agreed to extract customer data from iPhones more than 70 times following requests from US investigators.

But there was a crucial difference in these cases. They involved handing over data that Apple could extract while the iPhone was locked. This meant Apple didn’t need to create the backdoor decryption software the FBI is now calling for.

Privacy campaigners say such a tool could be used to unlock anyone’s iPhone. Cook emphasised this danger in his letter: “In the physical world, it would be the equivalent of a master key, capable of opening hundreds of millions of locks — from restaurants and banks to stores and homes”.

But the FBI insists that the tool would be used only once – to unlock Farook’s phone. Apple could guarantee this, says one encryption expert. Dan Guido, from security company Trail of Bits, suggested on his blog that once Apple built the backdoor tool for iOS, the FBI should send Farook’s iPhone to the company for examination. This would mean that the “customized version of iOS never physically leaves the Apple campus”, and so never gets used on another iPhone.

Apple is playing a dangerous game. If, as Guido claims, Farook’s phone can be unlocked without it leading to further invasions of privacy, then many people will wonder why the company is making such a big deal about the principle involved. The longer Apple refuses to help, the more its position could seem like arrogant posturing.